Eventscripts parallel processing

5/26/2023

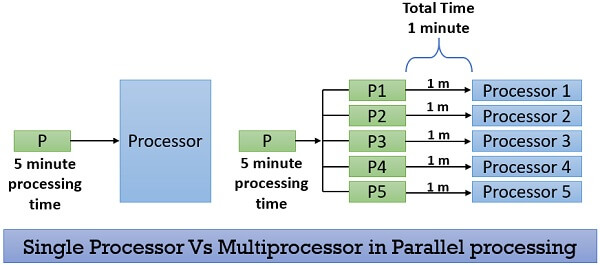

If you're like me, you've noticed (perhaps despairingly) that FDMEE settings can be a bit extreme. You need to tightly control the order and concurrency of your data loads? Sequential to the rescue! Oh wait now, you need to allow multiple loads to process at the same time? Parallel to the rescue! Oh wait again, neither of these solutions can handle users who do/expect the following:

- Conspire against you by launching 50 batches in a short window, crippling your system performance.
- Accidentally execute the same batch two or more times in a row (maybe because they didn't wait long enough for the confirmation popup, perhaps due to some system latency, see the point above).
- Have certain processes which need sequential loading.
- Have other processes that can run in parallel and should finish as quickly as possible.

On its face, the solution is pretty straightforward: create batches for these different processes. In cases where order matters, configure the batch to perform sequential processing. In those other cases where order is irrelevant, configure the batch to allow parallel processing. Go into your ODI topology to allow parallel processing and you're all good... or mostly good. You have the perfect plan save for one wrinkle, namely the need to limit total concurrent processing. If you allow full-bore parallel processing you can quickly find that it's like a fire hose. Having a lot of data flowing concurrently through your TDATASEG_T table, especially when there are a lot of mapping rules, can really gum things up.

If only there were a way to control how many concurrent jobs FDMEE will allow. Not just within a batch, but across all batches... or manually executed DLRs... basically over the whole system. FDMEE user guide, system settings, application settings: nope, nothing there fits the bill.

So what's a consultant to do? Roll a custom throttling solution of course! And in fact, it turns out that it can be done pretty simply. For extra credit, I'll even include a few different versions of the code which allow different options/restrictions/configurations.

My implementation relies on the BefImport event scripts to check whether the current process should be allowed to run. If not, then it waits a random interval of time before checking again. On and on it goes until it gets the green light.

Getting to the actual solution, first you need to have modified FDMEE and ODI to allow parallel processing. Then you need to have added a BefImport script for each of your target applications. Once those are in place you can get to work by following three simple steps:

1. Edit BefImport to import your required libraries (note that I'm assuming you have some sort of custom shared library for all your Jython goodies).
2. Edit BefImport to check your throttling function for permission to run (I wait a random amount of time between 15 and 45 seconds; obviously you can change that to your liking): `while mainLib.AllowDataImport(fdmContext, fdmAPI) == False: # Wait until the process is allowed to continue`
3. Edit your custom shared library to add your throttling function, which might look like:
   A) This (simple first in, first out, with an optional parameter for the max number of concurrent jobs): `def AllowDataImport(loadid, api, totalMax = 10):`
   B) Or this (slightly more complex first in, first out, but which prevents a DLR from running more than once at a time, with an optional parameter for the max number of concurrent jobs): `def AllowDataImport(loadid, api, totalMax = 10):`
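To make the throttling idea described in this post a bit more concrete, here is a minimal sketch of the decision logic and the wait loop. It is not the original library code. The names `allow_data_import`, `wait_for_turn`, `fetch_running_jobs` and `one_per_rule` are hypothetical, and the list of in-flight jobs is passed in as plain `(process_id, rule_id)` tuples instead of being read from FDMEE, so the sketch runs outside the product. It also assumes that a lower process id means the job was submitted earlier.

```python
import random
import time


def allow_data_import(load_id, running_jobs, total_max=10, one_per_rule=False):
    """Return True if the job `load_id` may start its import.

    running_jobs is a list of (process_id, rule_id) tuples covering every
    job currently in flight, including `load_id` itself (hypothetical
    shape; in FDMEE this would have to come from a query).
    """
    slots_used = 0
    rules_seen = set()
    # First in, first out: walk the jobs from oldest to newest.
    for process_id, rule_id in sorted(running_jobs):
        if slots_used >= total_max:
            return False  # every slot is held by an earlier job
        # Variant B only: an earlier job already holds this rule.
        duplicate = one_per_rule and rule_id in rules_seen
        if process_id == load_id:
            return not duplicate
        if not duplicate:
            slots_used += 1
            rules_seen.add(rule_id)
    return False  # load_id was not found among the running jobs


def wait_for_turn(load_id, fetch_running_jobs, total_max=10,
                  one_per_rule=False, min_wait=15, max_wait=45,
                  sleep=time.sleep):
    """Poll until allow_data_import gives the green light.

    fetch_running_jobs is a caller-supplied function returning the current
    (process_id, rule_id) list; `sleep` is injectable so the loop can be
    exercised without real waiting.
    """
    while not allow_data_import(load_id, fetch_running_jobs(),
                                total_max, one_per_rule):
        # Random pause so queued jobs do not all re-check at once.
        sleep(random.randint(min_wait, max_wait))
```

With `one_per_rule=False` this behaves like variant A (plain first in, first out with a cap), and with `one_per_rule=True` like variant B (a second run of the same DLR waits for the first). In a real BefImport script, `fetch_running_jobs` would presumably wrap a query against FDMEE's process tables through `fdmAPI`; that part is left out because the exact query depends on your environment.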
